- Task Duration: This metric measures the time taken to execute each task. It can help identify tasks that are taking longer than expected to run.
- Task Instance Context: This metric provides information about the context in which a task instance is running. This can include information such as the task's state, start time, and end time.
- Task State: This metric provides information about the state of each task. Tasks can be in states such as running, success, failed, or queued.
- DAG Processing: This metric measures the time taken to process each Directed Acyclic Graph (DAG). If this time is high, it could indicate a problem with your DAG definitions.
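As a rough sketch of how task-duration data might be used, the snippet below flags task runs that exceed a time threshold. The `TaskRun` record, the sample task names, and the 300-second threshold are hypothetical and not part of Airflow; in practice the start and end times would come from whatever metrics backend you use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TaskRun:
    """Hypothetical record of one task run (not an Airflow class)."""
    task_id: str
    state: str
    start: datetime
    end: datetime

    @property
    def duration(self) -> timedelta:
        return self.end - self.start


def slow_tasks(runs, threshold):
    """Return the task_ids of runs that took longer than `threshold`."""
    return [run.task_id for run in runs if run.duration > threshold]


# Illustrative data only.
runs = [
    TaskRun("extract", "success", datetime(2024, 1, 1, 0, 0), datetime(2024, 1, 1, 0, 2)),
    TaskRun("transform", "success", datetime(2024, 1, 1, 0, 2), datetime(2024, 1, 1, 0, 12)),
]
flagged = slow_tasks(runs, timedelta(seconds=300))
print(flagged)
```

Here only the second run is flagged, because it took ten minutes against a five-minute threshold.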

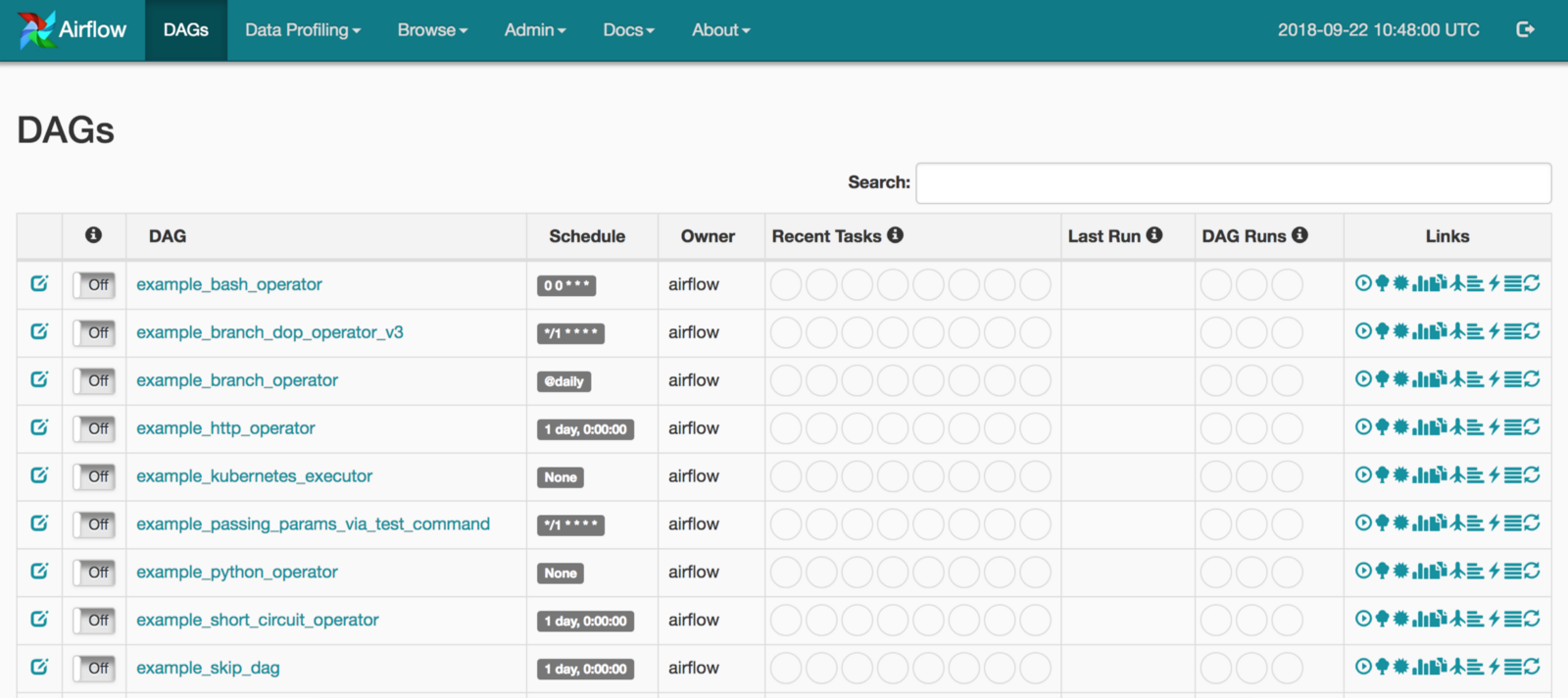

Apache Airflow is a platform that allows you to programmatically author, schedule, and monitor workflows. The Airflow web server is a crucial component of this platform, providing a user interface for managing and monitoring Airflow workflows. The Airflow web server provides several metrics that can be used to monitor the health and performance of your Airflow instance, including task duration, task instance context, task state, and DAG processing time. These metrics can be gathered using the StatsD/Prometheus metrics for Airflow.

When you configure remote logging to ElasticSearch, remember to replace localhost with your actual ElasticSearch host. For more information on configuring remote logging in Airflow, refer to the official Airflow documentation.

Please note that the logs generated by Airflow workers can contain sensitive information, so it's important to secure access to them. You can do this by configuring access controls on your logging service or by encrypting the logs.
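To illustrate what gathering StatsD metrics involves, here is a minimal sketch that parses lines in the plain StatsD text format (`name:value|type`). The metric names in the sample are illustrative assumptions, not a guaranteed list of what your Airflow version emits, so check them against your own deployment.

```python
from typing import NamedTuple


class Metric(NamedTuple):
    name: str
    value: float
    kind: str  # StatsD type code, e.g. "c" counter, "g" gauge, "ms" timer


def parse_statsd_line(line: str) -> Metric:
    """Parse one 'name:value|type' StatsD line (sample rates and tags are ignored)."""
    name, rest = line.strip().split(":", 1)
    fields = rest.split("|")
    return Metric(name, float(fields[0]), fields[1])


# Illustrative sample lines; the metric names are assumptions.
sample = [
    "airflow.dag_processing.total_parse_time:4.2|g",
    "airflow.dag.example_dag.example_task.duration:1530|ms",
]
metrics = [parse_statsd_line(line) for line in sample]
for m in metrics:
    print(m.name, m.value, m.kind)
```

In a real setup you would more likely point Airflow at an existing StatsD daemon or exporter than parse the lines yourself; the parser is only meant to show the shape of the data.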

Apache Airflow workers generate logs that provide detailed information about the tasks they execute. These logs are crucial for debugging and monitoring the performance of your Airflow tasks.

By default, Airflow stores logs locally on the machine where the tasks are run. The logs are located in the AIRFLOW_HOME/logs directory, and the log file location can be changed in the airflow.cfg file. Each task has its own log file, which is named after the task instance's dag_id, task_id, and execution_date. Here is an example of how to access a log file for a specific task: `log_file = open('/path/to/airflow/logs/dag_id/task_id/execution_date.log')`

In addition to local logging, Airflow also supports remote logging to various services such as Amazon S3, Google Cloud Storage, ElasticSearch, and others. This is particularly useful when you are running Airflow in a distributed environment with multiple workers, and it can be configured in the airflow.cfg file. For instance, the settings needed to enable remote logging to ElasticSearch include the following in your airflow.cfg: `json_fields = asctime, filename, lineno, levelname, message`

For more information about logging in Apache Airflow, you can refer to the official Apache Airflow documentation.
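The per-task log layout described above can be wrapped in a small helper. This is a sketch that assumes the dag_id/task_id/execution_date layout from the text; the actual file layout depends on your Airflow version and configuration, and the function names are hypothetical.

```python
from pathlib import Path


def task_log_path(base_log_folder, dag_id, task_id, execution_date):
    """Build the log path using the dag_id/task_id/execution_date layout (assumed)."""
    return Path(base_log_folder) / dag_id / task_id / f"{execution_date}.log"


def read_task_log(base_log_folder, dag_id, task_id, execution_date):
    """Return the log text, or None if the file does not exist."""
    path = task_log_path(base_log_folder, dag_id, task_id, execution_date)
    if not path.is_file():
        return None
    # A context manager closes the file even if reading fails.
    with path.open(encoding="utf-8", errors="replace") as log_file:
        return log_file.read()
```

Compared with a bare `open(...)` call, this version closes the file automatically and returns `None` instead of raising when the log is missing.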

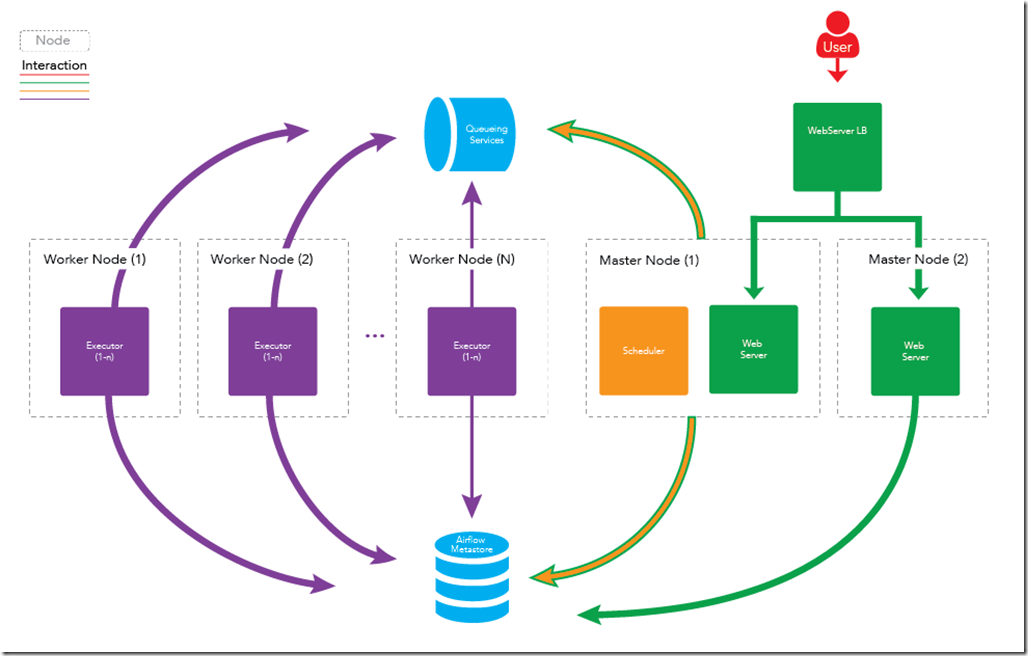

The Apache Airflow Scheduler is a key component of the Airflow architecture, responsible for scheduling and triggering tasks. It generates logs that provide valuable insights into its operations and can be crucial for debugging and monitoring.

The Scheduler logs primarily include information about:

- The status of the tasks (whether they are running, succeeded, or failed).
- The time when tasks are scheduled and executed.
- Errors or exceptions that occur during the scheduling or execution of tasks.
- The DAGs (Directed Acyclic Graphs) that are being processed.

By default, these logs are stored in the AIRFLOW_HOME/logs/scheduler directory. The naming convention for the log files is YYYY-MM-DD, where YYYY-MM-DD is the date when the logs were generated.

You can configure the logging level and the log file location in the airflow.cfg file. For example, you can set the logging level to DEBUG by modifying the airflow.cfg file.
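As a hedged sketch of that configuration change, the snippet below updates `logging_level` (and optionally `base_log_folder`) in airflow.cfg-style text using Python's `configparser`. The section name is an assumption: these options sit under `[logging]` in recent Airflow releases and under `[core]` in older ones, so adjust `SECTION` to match your file. The helper name and sample path are hypothetical.

```python
import configparser
import io

# Assumption: logging options live under [logging]; some Airflow versions use [core].
SECTION = "logging"


def set_logging_options(cfg_text, level="DEBUG", base_log_folder=None):
    """Return config text with logging_level (and optionally base_log_folder) updated."""
    # interpolation=None keeps literal '%' characters in values untouched.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    if not parser.has_section(SECTION):
        parser.add_section(SECTION)
    parser.set(SECTION, "logging_level", level)
    if base_log_folder is not None:
        parser.set(SECTION, "base_log_folder", base_log_folder)
    out = io.StringIO()
    parser.write(out)
    return out.getvalue()


original = "[logging]\nlogging_level = INFO\n"
updated = set_logging_options(original, base_log_folder="/path/to/airflow/logs")
print(updated)
```

Note that `configparser` discards comments when it writes a file back, so for a real airflow.cfg it is often simpler to edit the two lines by hand; the script mainly shows which keys are involved.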
